Introduction

This is the second part of our AI in Precision Medicine: From Data to Clinical Insight series. Check out Part 1: Modality-Specific Learning Explained first!

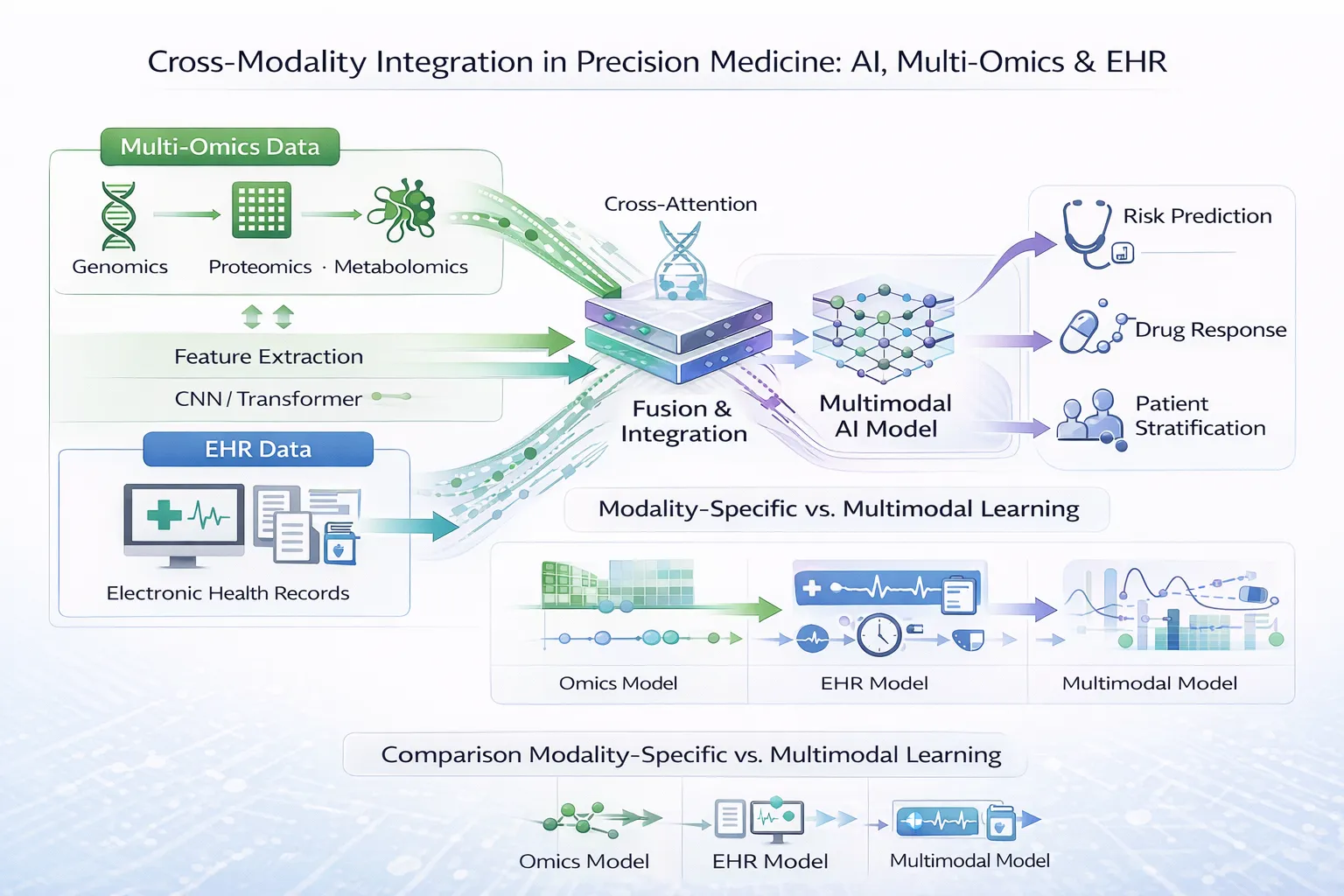

While modality-specific learning enables deep insights from individual data sources, the true promise of precision medicine lies in integrating heterogeneous datasets—linking molecular biology with real-world clinical outcomes.

Combining multi-omics data with electronic health records (EHRs) offers a more complete representation of disease, bridging mechanistic understanding with patient trajectories. However, this integration presents substantial computational, clinical, and ethical challenges.

In this article, we explore how cross-modality integration learning is transforming biomedical research—and the barriers that must be overcome for widespread clinical adoption.

Key Takeaways

- Cross-modality learning integrates molecular and clinical data for deeper insight

- AI models must align heterogeneous, high-dimensional datasets

- Integration improves prediction, diagnosis, and treatment selection

- Significant challenges remain in data quality, interpretability, and clinical implementation

Section 1: Cross-Modality Integration Learning

What Is Cross-Modality Integration?

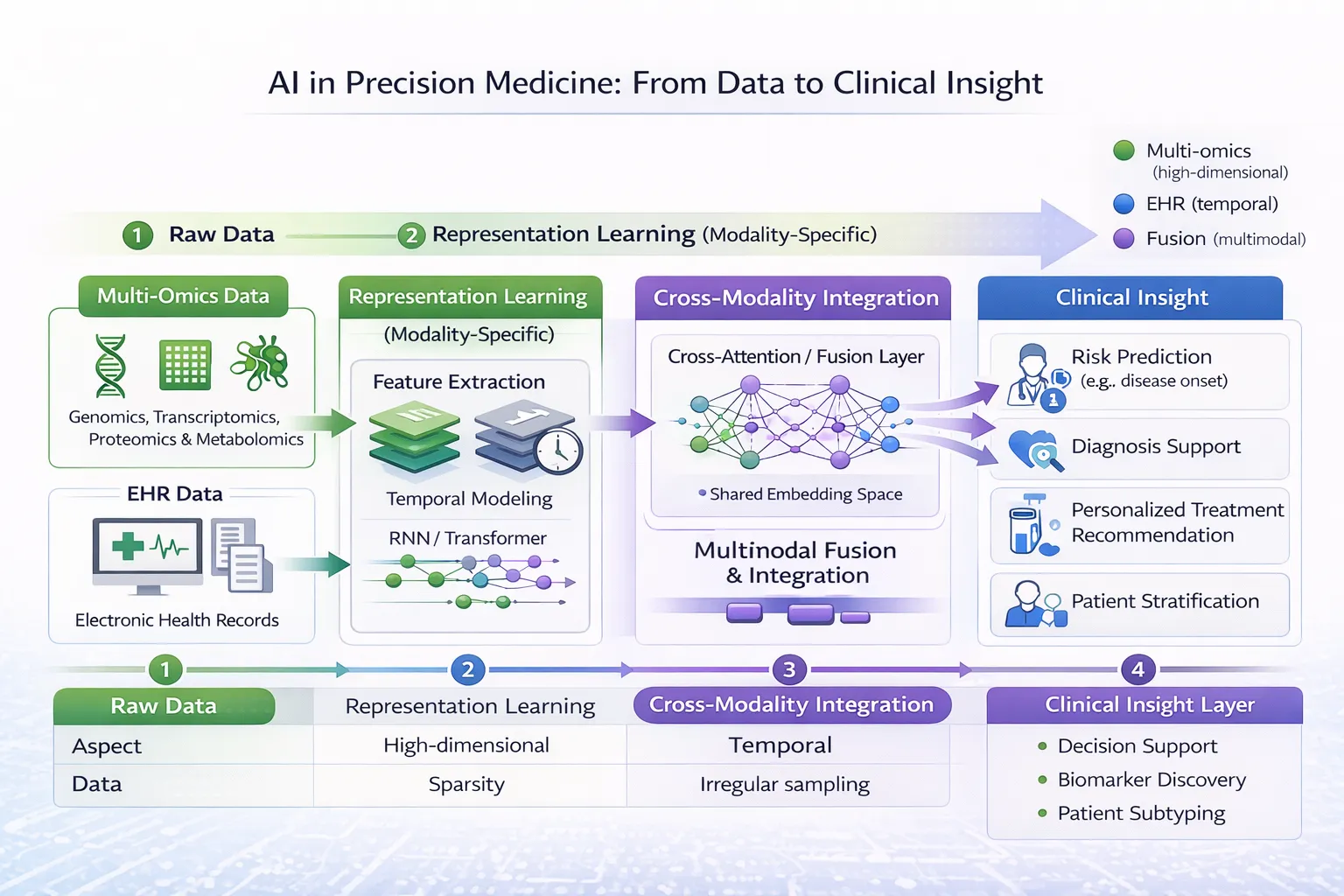

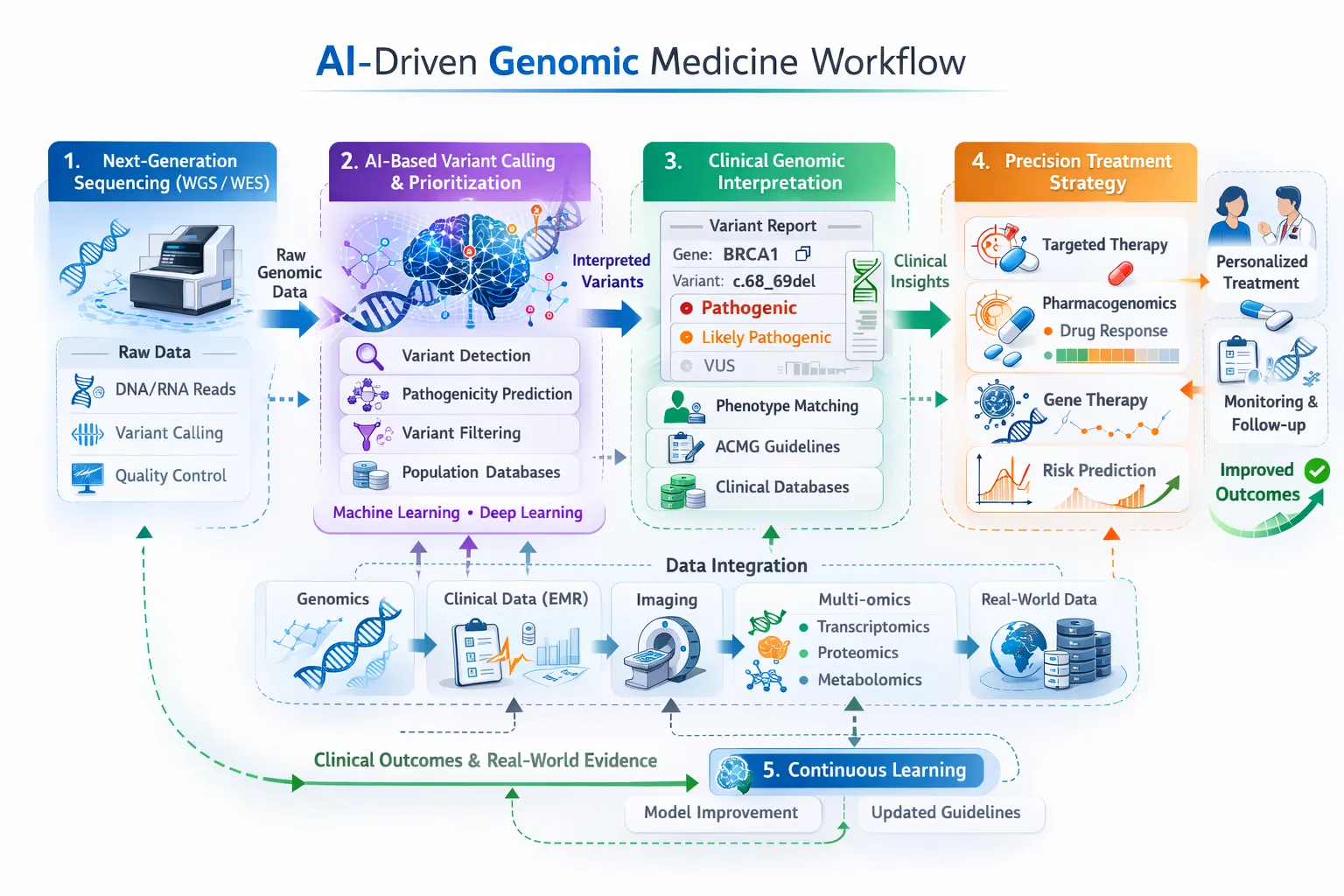

Cross-modality integration refers to the process of combining multiple data types—such as genomics, transcriptomics, and EHR data—into a unified analytical framework.

This approach enables:

- Linking genetic variation to clinical outcomes

- Connecting molecular mechanisms with disease progression

- Improving predictive accuracy through complementary data sources

Why Integration Matters

Individual modalities provide only partial insight:

- Multi-omics data reveals biological mechanisms

- EHR data captures clinical context and outcomes

When integrated, these datasets allow models to:

- Identify clinically relevant biomarkers

- Predict treatment response more accurately

- Support truly personalised medicine

Clinical example: In oncology, combining genomic mutations with clinical history improves prediction of treatment response and survival outcomes, outperforming single-modality models¹.

Approaches to Cross-Modality Integration

1. Early Integration (Feature-Level Fusion)

Data from multiple modalities is combined at the input stage:

- Features are concatenated into a single dataset

- A unified model is trained on the combined feature space

Advantages:

- Simple implementation

- Captures interactions between modalities early

Limitations:

- Sensitive to noise and missing data

- Difficult to scale with high-dimensional datasets
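As a minimal sketch of feature-level fusion, the snippet below concatenates per-patient feature vectors from two modalities. The function name `early_fusion`, the patient IDs, and all values are illustrative assumptions, not taken from any specific pipeline. Note that a patient missing from either table is dropped, which is one concrete way early fusion is sensitive to missing data.

```python
from typing import Dict, List

FeatureTable = Dict[str, List[float]]  # patient ID -> feature vector


def early_fusion(omics: FeatureTable, ehr: FeatureTable) -> FeatureTable:
    """Concatenate omics and EHR features for patients present in both tables."""
    shared_ids = sorted(set(omics) & set(ehr))
    return {pid: omics[pid] + ehr[pid] for pid in shared_ids}


# Toy values for illustration only (hypothetical patients and features).
omics = {"p1": [0.2, 1.5, -0.3], "p2": [1.1, 0.0, 0.7], "p3": [0.4, 0.9, 0.1]}
ehr = {"p1": [63.0, 1.0], "p2": [48.0, 0.0]}  # p3 has no clinical record

fused = early_fusion(omics, ehr)
print(fused)  # p3 is absent because it lacks EHR features
```

A single downstream model would then be trained on the fused vectors. In practice the columns usually need rescaling first, since omics and clinical features sit on very different numeric ranges.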

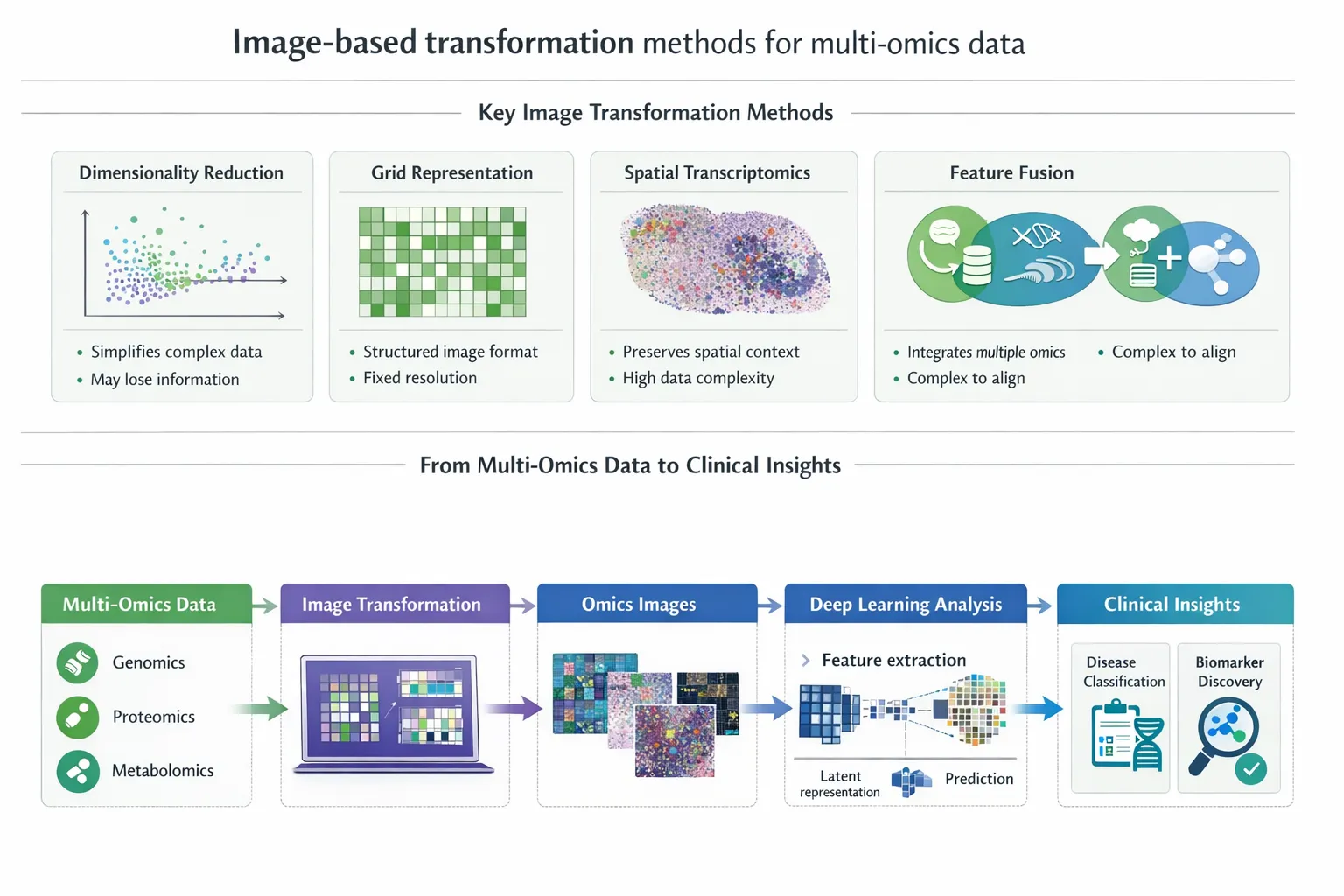

2. Intermediate Integration (Representation-Level Fusion)

Each modality is first processed independently to learn a latent representation, and these representations are then combined. Typical building blocks include:

- Multi-omics embeddings (e.g., via autoencoders)

- EHR embeddings (e.g., via transformers)

- Fusion through shared latent space

Advantages:

- Reduces dimensionality

- Improves robustness

- Preserves modality-specific structure

Intermediate fusion is among the most widely used strategies in current multimodal AI systems.
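The idea can be sketched in a few lines. Here each encoder is a fixed linear projection standing in for a trained autoencoder or transformer. The weight matrices `W_OMICS` and `W_EHR`, the input values, and the choice to fuse by concatenation are all assumptions made for illustration.

```python
from typing import List

Matrix = List[List[float]]  # one row of weights per latent dimension


def encode(x: List[float], weights: Matrix) -> List[float]:
    """Project a raw feature vector into a lower-dimensional latent space."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def fuse(z_omics: List[float], z_ehr: List[float]) -> List[float]:
    """Combine modality embeddings; here simply by concatenation."""
    return z_omics + z_ehr


# Hypothetical "learned" weights: 4 omics features -> 2 dims, 3 EHR features -> 2 dims.
W_OMICS = [[0.5, 0.5, 0.0, 0.0], [0.0, 0.0, 0.5, 0.5]]
W_EHR = [[1.0, 0.0, 0.0], [0.0, 0.5, 0.5]]

omics_x = [2.0, 4.0, 1.0, 3.0]
ehr_x = [1.0, 2.0, 6.0]

z = fuse(encode(omics_x, W_OMICS), encode(ehr_x, W_EHR))
print(z)  # 4-dimensional joint representation built from 7 raw features
```

Because each modality is compressed before fusion, the joint representation is smaller than the raw concatenation, and each encoder can be designed around the structure of its own data type.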

3. Late Integration (Decision-Level Fusion)

Separate models are trained on each modality, and their outputs are combined:

- Ensemble methods

- Weighted predictions

Advantages:

- Flexible and modular

- Handles missing modalities well

Limitations:

- May miss deeper cross-modal interactions
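A minimal sketch of decision-level fusion with weighted predictions follows. It assumes each modality-specific model has already produced a probability, and that a missing modality is represented as `None`. The function name `late_fusion`, the weights, and the probabilities are hypothetical.

```python
from typing import Dict, Optional


def late_fusion(probs: Dict[str, Optional[float]], weights: Dict[str, float]) -> float:
    """Weighted average of per-modality probabilities, skipping missing modalities."""
    available = {m: p for m, p in probs.items() if p is not None}
    if not available:
        raise ValueError("no modality produced a prediction")
    total_weight = sum(weights[m] for m in available)
    return sum(weights[m] * p for m, p in available.items()) / total_weight


weights = {"omics": 0.5, "ehr": 0.5}  # illustrative weights, not tuned

both = late_fusion({"omics": 0.8, "ehr": 0.4}, weights)
ehr_only = late_fusion({"omics": None, "ehr": 0.4}, weights)
print(both, ehr_only)
```

Renormalising over the available modalities is what lets the ensemble still return a prediction for a patient without an omics profile. The trade-off is that the models never see each other's inputs, so interactions between modalities are not learned.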

4. Advanced Deep Learning Architectures

Emerging models include:

- Multimodal transformers

- Graph neural networks (GNNs) integrating biological networks with clinical data

- Attention mechanisms to prioritise relevant features across modalities

These approaches enable more sophisticated modelling of complex biological and clinical relationships².
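To make the attention idea concrete, the sketch below scores each modality embedding against a query vector using scaled dot products, turns the scores into weights with a softmax, and returns the weighted sum. It is a heavily simplified, single-head illustration. The embeddings act as both keys and values, and the query, embeddings, and function names are assumptions rather than any particular published architecture.

```python
import math
from typing import Dict, List, Tuple


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_fuse(
    query: List[float], embeddings: Dict[str, List[float]]
) -> Tuple[Dict[str, float], List[float]]:
    """Weight each modality embedding by its scaled dot product with the query."""
    names = list(embeddings)
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, embeddings[n])) / scale for n in names]
    weights = softmax(scores)
    fused = [
        sum(w * embeddings[n][i] for w, n in zip(weights, names))
        for i in range(len(query))
    ]
    return dict(zip(names, weights)), fused


# Toy 2-dimensional embeddings; the query is aligned with the omics embedding.
query = [1.0, 0.0]
embeddings = {"omics": [2.0, 0.0], "ehr": [0.0, 2.0]}

attn_weights, fused = attention_fuse(query, embeddings)
print(attn_weights, fused)
```

The per-modality weights also offer a rough view of which data source the model leaned on for a given prediction, although attention weights alone are not a full explanation.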

Clinical Applications of Cross-Modality Integration

1. Precision Oncology

- Integrating tumour genomics with clinical records

- Predicting response to targeted therapies

2. Rare Disease Diagnosis

- Combining genomic sequencing with clinical phenotypes

- Improving diagnostic yield in undiagnosed patients

3. Cardiovascular Risk Prediction

- Integrating polygenic risk scores with lifestyle and clinical data
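A polygenic risk score is commonly computed as a weighted sum of risk-allele dosages (0, 1, or 2 copies per variant), with weights taken from association studies. The sketch below shows only that arithmetic. The variant names and effect sizes are invented for illustration and carry no clinical meaning.

```python
from typing import Dict


def polygenic_risk_score(dosages: Dict[str, int], effect_sizes: Dict[str, float]) -> float:
    """Sum of effect size x allele dosage over the variants in the score."""
    missing = set(effect_sizes) - set(dosages)
    if missing:
        raise KeyError(f"dosage missing for variants: {sorted(missing)}")
    return sum(effect_sizes[v] * dosages[v] for v in effect_sizes)


# Hypothetical variants and effect sizes (illustrative only).
effect_sizes = {"variant_a": 0.10, "variant_b": 0.25, "variant_c": -0.05}
dosages = {"variant_a": 2, "variant_b": 0, "variant_c": 1}

score = polygenic_risk_score(dosages, effect_sizes)
print(score)
```

The resulting score can then be treated as one more feature alongside lifestyle and clinical variables in a downstream risk model.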

4. Drug Discovery and Repurposing

- Linking molecular pathways with real-world patient outcomes

Section 2: Challenges in Cross-Modality Integration

Despite its promise, cross-modality learning faces significant barriers.

1. Data Heterogeneity

Different modalities vary in:

- Scale (molecular vs clinical)

- Structure (tabular, sequence, text)

- Distribution

Aligning these datasets into a unified framework remains a major technical challenge.
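One small piece of that alignment is putting features on a comparable numeric scale. The sketch below applies z-score standardisation to two toy columns, one expression-like and one clinical. The values and the function name `standardise` are assumptions, and real pipelines often need modality-specific transforms (for example log-transforming counts) before this step.

```python
from statistics import mean, pstdev
from typing import List


def standardise(column: List[float]) -> List[float]:
    """Rescale a feature to zero mean and unit (population) standard deviation."""
    mu = mean(column)
    sigma = pstdev(column)
    if sigma == 0:
        return [0.0 for _ in column]  # constant feature carries no signal
    return [(v - mu) / sigma for v in column]


expression = [100.0, 200.0, 300.0]  # toy expression-like values
age_years = [40.0, 50.0, 60.0]      # toy clinical values

z_expression = standardise(expression)
z_age = standardise(age_years)
print(z_expression, z_age)
```

After standardisation the two columns are numerically identical here, even though their raw ranges differ by an order of magnitude, so neither dominates a model purely because of its units.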

2. Missing and Incomplete Data

Not all patients have:

- Complete multi-omics profiles

- Fully documented clinical records

This leads to:

- Biased datasets

- Reduced model generalisability
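A simple baseline for handling gaps is to impute missing values and keep an explicit indicator of what was observed, so a downstream model can learn that missingness itself may be informative. The sketch below uses mean imputation purely as an illustration. The function name `impute_with_mask` and the values are assumptions, and mean imputation does not remove the bias described above.

```python
from typing import List, Optional, Tuple


def impute_with_mask(column: List[Optional[float]]) -> Tuple[List[float], List[int]]:
    """Fill missing values with the observed mean and return an observed/missing mask."""
    observed = [v for v in column if v is not None]
    fill = sum(observed) / len(observed) if observed else 0.0
    values = [fill if v is None else v for v in column]
    mask = [0 if v is None else 1 for v in column]  # 1 = observed, 0 = imputed
    return values, mask


# Toy lab measurement with one missing entry.
lab_values = [1.0, None, 3.0]
filled, observed_mask = impute_with_mask(lab_values)
print(filled, observed_mask)
```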

3. High Dimensionality

Combining datasets further increases dimensionality, compounding:

- Overfitting risk

- Computational complexity
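One common first step against high dimensionality is to discard features that barely vary across patients before any model is trained. The sketch below keeps the `k` highest-variance columns. The function name `select_by_variance`, the data, and the choice of variance as the criterion are illustrative assumptions, since other filters or learned embeddings are equally plausible.

```python
from statistics import pvariance
from typing import List, Tuple


def select_by_variance(rows: List[List[float]], k: int) -> Tuple[List[int], List[List[float]]]:
    """Keep the k columns with the highest population variance."""
    n_features = len(rows[0])
    variances = [pvariance([row[j] for row in rows]) for j in range(n_features)]
    ranked = sorted(range(n_features), key=lambda j: variances[j], reverse=True)
    keep = sorted(ranked[:k])  # preserve original column order
    return keep, [[row[j] for j in keep] for row in rows]


# Three patients, three features; the first feature is constant.
rows = [
    [1.0, 5.0, 0.0],
    [1.0, 7.0, 2.0],
    [1.0, 9.0, 1.0],
]

kept_columns, reduced = select_by_variance(rows, k=2)
print(kept_columns, reduced)
```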

4. Interpretability

Clinicians require:

- Transparent models

- Explainable predictions

However, many multimodal AI models operate as “black boxes”, limiting clinical trust and adoption.

5. Data Privacy and Governance

Healthcare data is highly sensitive, raising concerns around:

- Patient privacy

- Data sharing across institutions

- Regulatory compliance

Federated learning and secure data-sharing frameworks are emerging solutions.

6. Computational and Infrastructure Constraints

Cross-modality models require:

- Large-scale computing resources

- Advanced data pipelines

- Interoperable systems

These requirements can limit adoption in real-world healthcare settings.

Section 3: Opportunities and Future Directions

Despite these challenges, the field is advancing rapidly.

1. Improved Multimodal AI Models

Next-generation architectures are:

- More robust to missing data

- Better at capturing cross-modal relationships

- Increasingly interpretable

2. Federated and Privacy-Preserving Learning

New approaches allow models to be trained across institutions without sharing raw data, improving:

- Data access

- Privacy protection
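The core aggregation step can be sketched as a weighted average of model parameters in the style of federated averaging. Each site trains locally and shares only its parameter vector and sample count. The sketch covers a single aggregation round and omits local training, communication, and any secure aggregation or differential privacy. The site values and the function name `federated_average` are hypothetical.

```python
from typing import List


def federated_average(site_params: List[List[float]], site_sizes: List[int]) -> List[float]:
    """Average parameter vectors across sites, weighted by local sample count."""
    if len(site_params) != len(site_sizes):
        raise ValueError("one sample count is required per site")
    total = sum(site_sizes)
    dim = len(site_params[0])
    return [
        sum(params[i] * n for params, n in zip(site_params, site_sizes)) / total
        for i in range(dim)
    ]


# Two hypothetical hospitals with locally trained 2-parameter models.
site_params = [[1.0, 2.0], [3.0, 4.0]]
site_sizes = [100, 300]

global_params = federated_average(site_params, site_sizes)
print(global_params)
```

Only parameters and counts leave each institution in this scheme. Patient-level records stay local, although shared parameters can still leak information without additional safeguards.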

3. Standardisation of Biomedical Data

Efforts are under way to standardise:

- Clinical coding systems

- Omics data formats

Shared standards should improve interoperability and, in turn, model performance.

4. Integration into Clinical Workflows

AI-driven multimodal systems are beginning to:

- Support clinical decision-making

- Assist in diagnosis and prognosis

- Guide personalised treatment

5. Toward Digital Twins in Medicine

A long-term vision is the creation of digital patient models, integrating:

- Genomic data

- Clinical history

- Environmental factors

These “digital twins” could simulate disease progression and treatment outcomes in silico.

Clinical Relevance

For clinicians, cross-modality integration offers:

- More accurate diagnosis through combined molecular and clinical data

- Better risk stratification and prognosis

- Improved treatment selection and monitoring

However, careful interpretation, validation, and integration into workflows are essential for safe and effective use.

Conclusion

Cross-modality integration represents the next frontier in precision medicine. By combining multi-omics data with electronic health records, AI models can provide a more comprehensive understanding of disease than ever before.

Yet, significant challenges remain—from data heterogeneity to model interpretability and clinical implementation. Addressing these barriers will be critical to unlocking the full potential of integrated biomedical data.

As research progresses, cross-modality learning is poised to transform healthcare—moving from data-rich systems to truly insight-driven, personalised medicine.